Pentesting involves hacking into companies. "Pentesting", or application security, involves analyzing code to find potential security issues in websites and applications. We discuss aspects of each, and where bug bounties fit between them.

Introduction

First things first, I'm not testing pens (unfortunately). Now... my professional job is "penetration tester" (often shortened to "pentester"), but I'm not really a pentester. I don't like saying that I am one because people tend to think that all I do is hack companies. That's not accurate, so I need to find a new name for what I do. I specifically want to discuss pentesting, "pentesting", and where bug bounties fit in all of this.

Pentesting vs. "Pentesting"

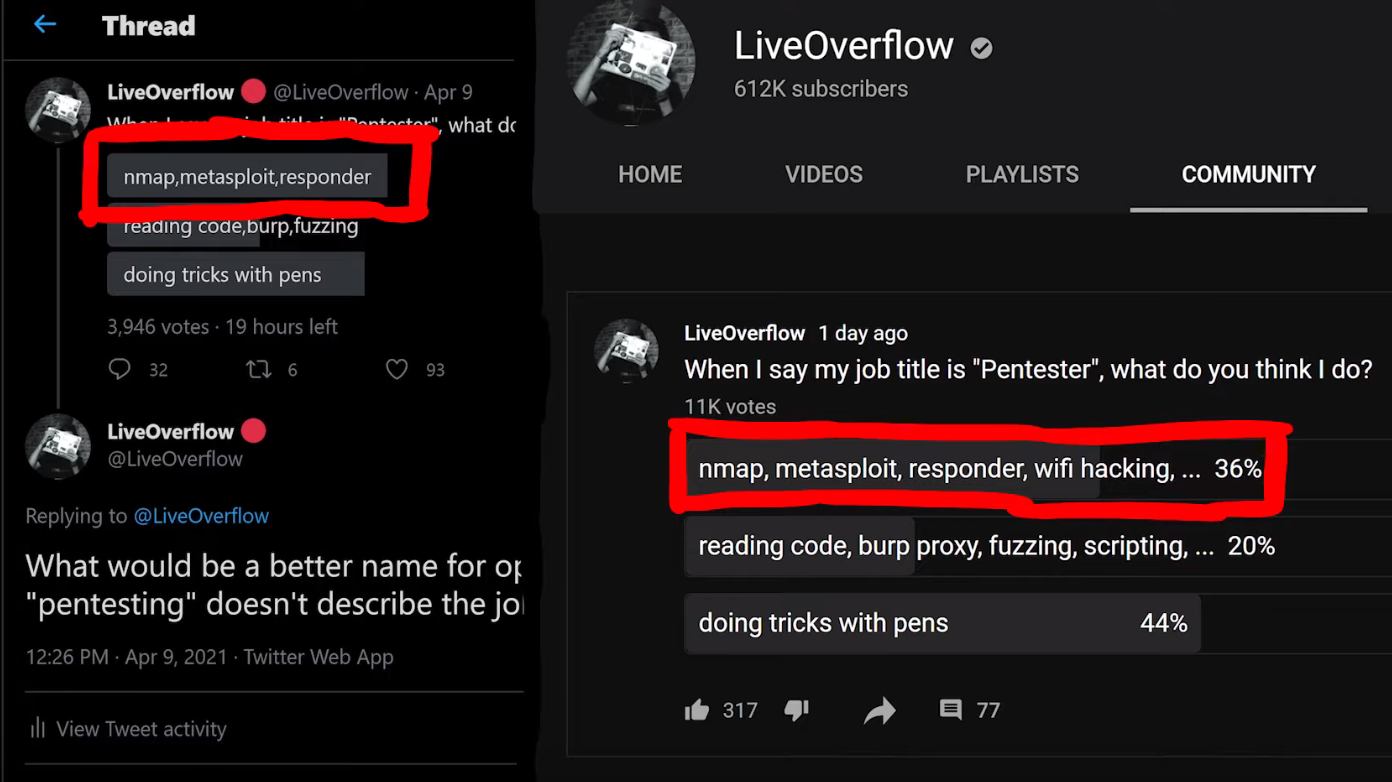

When I say the word "pentesting," what comes to your mind? I asked you via polls on Twitter and YouTube, and this is what you had to say:

Most people responded that nmap, Metasploit, responder, and wifi hacking tools were what they thought about first. This is really the area that red-teaming addresses. Red-teaming is an exercise often used in IT security where a group representing an enemy or threat (the red team) tries to get access to information or gain control of a network that they are not allowed to access. The blue team, conversely, must defend the information or the network by implementing the right safeguards. Red teams can be either groups internal to companies, or outside contractors hired to test a company's security.

An example of red-teaming is a contractor who comes onsite, tries to hack the wifi, then uses nmap to scan the network and finds some outdated servers where they can use Metasploit to look for potential exploits, all while running responder to win against the blue team... You get the idea. I haven't actually done this, so don't quote me on the methodology.

That is pentesting.

I am a "pentester". I don't actually use tools like this to do my job. Instead, I read code and carry out black-box testing. My job typically consists of testing websites, native applications, or mobile applications, in particular in terms of their security. I look at the code, I run dynamic testing, I use Burp and look at the API requests, I maybe do a bit of fuzzing, too. The whole point is to find new vulnerabilities that were not known before. Then, I gather all of my findings and write an extensive report, which I send to the client. This report contains all of the technical findings, of course, but also how I feel about their website or application.
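To give a rough idea of what "a bit of fuzzing" can look like, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: `parse_record` is a hypothetical target with a deliberately planted bug, and `fuzz` is a toy random-input loop, not a tool I use on real engagements.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Hypothetical target: first byte is the payload length, then the
    payload, then a zero terminator byte."""
    if not data:
        raise ValueError("empty record")
    length = data[0]
    # Planted bug: the declared length is trusted without checking
    # that the buffer is actually that long.
    terminator = data[1 + length]
    if terminator != 0:
        raise ValueError("missing terminator")
    return data[1:1 + length]


def fuzz(target, rounds=200, seed=0):
    """Throw short random inputs at the target and record unexpected crashes."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(rounds):
        size = rng.randrange(0, 9)
        data = bytes(rng.randrange(256) for _ in range(size))
        try:
            target(data)
        except ValueError:
            pass  # a clean rejection of bad input is the expected behavior
        except Exception as exc:
            # anything else is an unhandled error worth investigating
            crashes.append((data, type(exc).__name__))
    return crashes


crashes = fuzz(parse_record)
print(f"{len(crashes)} crashing inputs found")
```

Every crash here is an `IndexError` caused by the unchecked length byte. In a real report, that is the kind of finding you would then trace back to the code and write up.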

Different Extremes

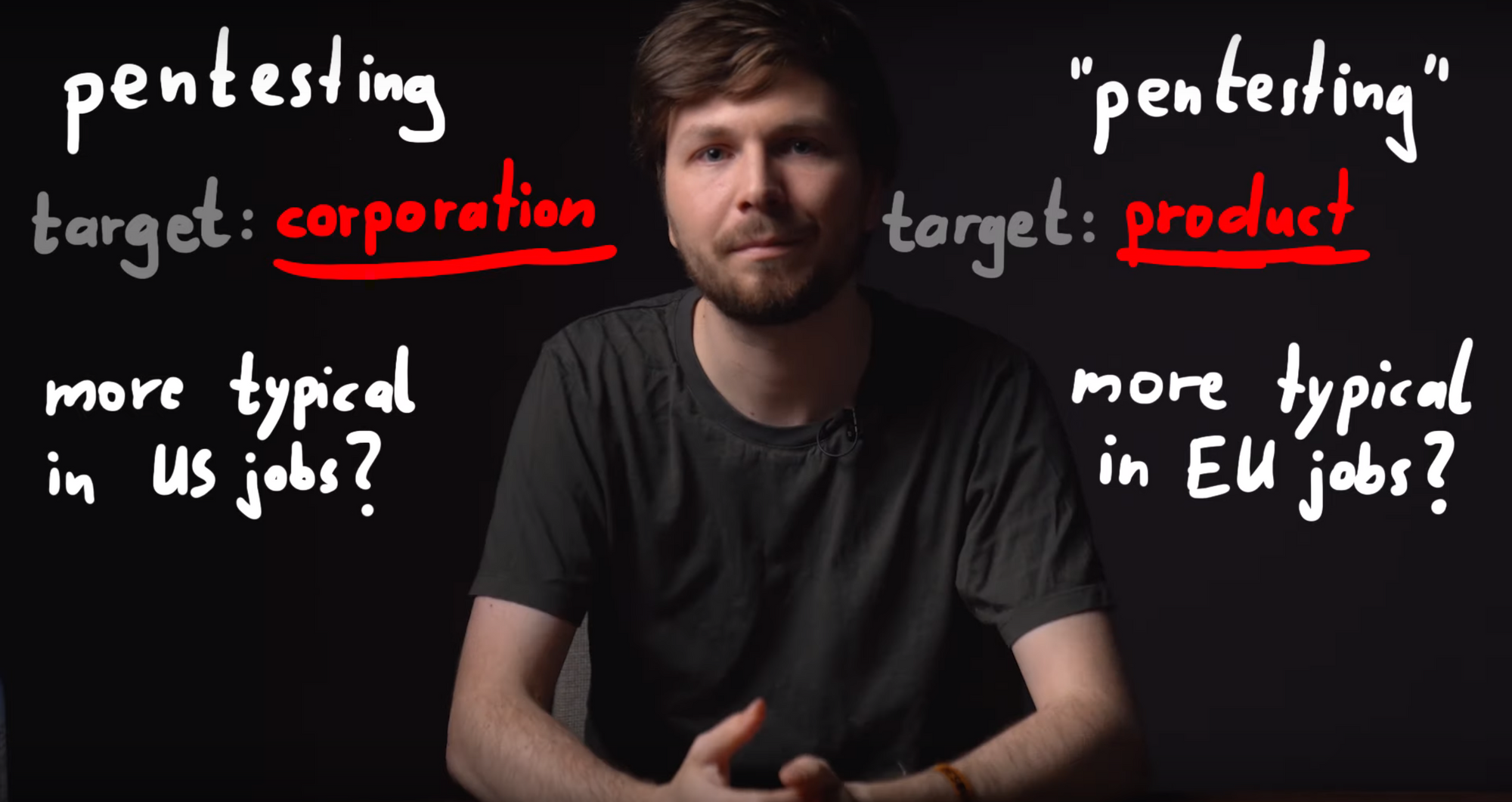

These are not quite the same definition of the word "pentesting", then. In fact, I'd say that they sit at opposite extremes of the scale. Pentesting is usually done at the corporation level. Sometimes, pentesting will involve working with products, but its general scope is typically very broad. Pentesters have at their disposal a variety of different tools to carry out their hacking task. Essentially, pentesting is about hacking a company.

"Pentesting" on the other hand is done at the zoomed-in application or website level. It is instead focused on the nitty-gritty technical details, trying to ascertain whether the customer data is safe, for instance. In fact, sometimes finding a vulnerability in a web application doesn't even affect the company directly, since the service is hosted on third party server equipment outside of the company itself.

It is important to note that companies should carry out both pentesting and "pentesting", either internally or via outsourcing. Having robust networks and websites and/or applications that can be secure and thwart hackers' attacks is essential for any company. This is to say, one is not better than the other; they are complementary, not exclusive.

I tend to gravitate towards "pentesting". I don't really like the corporate side as much as I do the application-level work. Focusing on details is not as easy with the former, whereas with the latter, details are the bread and butter. The reliance on a suite of different tools also isn't something that I am necessarily fond of. This is how I feel about it, and it is in no way a judgment call on the value of pentesting vs. "pentesting".

Across the Pond...

I've also noticed a cultural difference between Europe and the United States when it comes to what people think the word "pentesting" means. I don't mean to exclude the rest of the world; I have simply worked more often with people from those regions, and therefore, I am more attuned to their respective takes on the word. In my (anecdotal) experience, Europeans consider the word "pentesting" to denote the application-level one, whereas Americans would think more readily about red-teaming and corporate pentesting. I obviously haven't conducted an in-depth study on the reason for this difference; I have simply observed it as part of my interactions with people in the IT security sector. The polls you answered point to the same conclusion.

I Need A New Name

Calling both activities the word "pentesting" is an issue, then. We need a better naming system to better separate them and remove the ambiguity that using the same term poses. Once again, I asked you all what you thought about it; the best answer to me was "application security tester". So, for the remainder of this article, for clarity's sake, I'll call the corporate, high-level pentesting "pentesting", and the application-level vulnerability evaluation "appsec", short for "application security".

By the way, if you look at my YouTube channel, you'll find a video I recently made giving an overview of all the topics that I've covered on my channel. You might notice that I've never made a video on pentesting, whereas I have countless videos on application security. I don't have a single video where I've used Metasploit, I've probably used nmap once, and I most certainly have never brought up Active Directory, pass-the-hash, or wifi hacking tools. It's just not my kind of work. Again, I am not passing judgment here; it's just a preference.

I also do a lot of CTFs (Capture The Flag). They are more fun to me and are a great way to keep up with the industry and learn new things. Some say that CTFs are unrealistic, however. This point of view makes sense if you consider it from the pentesting perspective, where the scope is typically broad, and diving into the intricate details of how an application is programmed is generally outside of the red-teaming scope. For an application security tester, the very detail-oriented nature of CTFs is an excellent way to dig deep into the technology and hunt for little mistakes that could lead to a vulnerability. It is excellent practice for personal methodology and workflow, as well as for getting into the mindset of application security.

It seems that, because of the ambiguity of the "pentesting" term that we initially discussed in this article, the pentesting and appsec sides have talked past each other a few times. When people talk about jobs in IT security, they might mean the pentesting side, whereas I mean the appsec side. This extends from the offensive aspect of IT security to the defensive side, too. For instance, blue-teaming, the opposite of red-teaming, involves analysts in Security Operations Centers (SOCs) or Active Directory administrators who protect the corporation. On the application security side, there are programmers, software engineers, architects... their role is to protect customers' data, for example. With a clearer "name" for myself, I can better situate myself on the scale between application security and pentesting, and better relate the content that I've been publishing on YouTube to my skillset.

Security Education

Knowing tools and techniques such as Metasploit, Nessus, responder, wifi hacking, and RAT implants is important in the corporate hacking world. There are plenty of resources out there to learn how to use those tools well. I haven't worked with them though; I find that the pentesting job market is a little limited, and I am more interested in application security anyway. On the appsec side, there are tons of developers. I think that my channel's success is due to the greater applicability of my content for developers. The topics I cover encourage people to be critical of their own code and implementation logic and methods. I also think that CTFs benefit developers the most: you are probably a much better developer if you write code with security in mind. CTFs offer a great way to sharpen the skills you need and see what code works and what code doesn't when it comes to implementing specific programming features.

I also think that being a developer or working in devops is a lot closer to the application security work that I do than the pentesting side is. Personally, I'd much rather do software development with security in mind than do corporate pentesting as either blue team or red team. Again, it's a preference; both appsec and pentesting have their roles in the IT security industry.

This is all great, but where do bug bounties fall in all of this?

Bug Bounty's Place

I'm pretty sure you expected this, but I'd place bug bounties right in the middle between pentesting and appsec. They borrow aspects from both! You have a large scope to work with, and you approach the target from the outside. Some of the applications you target may be either products or part of the corporate side. Bug bounties also involve the use of tools and a strong knowledge of technical details and intricacies of programming, so that you can take a deep dive into an API or find exploits for application-specific vulnerabilities. This is also a place where the CTF mindset is helpful, though you are still approaching the exercise from the outside.

Doing pentesting or appsec in collaboration with a company gives you access to white-box knowledge, such as source code or beta software. This saves heaps of time, as you don't need to toil away, trying to bruteforce things such as API endpoints. You just look up all the configured routes and specifically audit the API endpoints. Generally, application security sits a little earlier in the software development pipeline, with testing carried out on a staging or development build before the code is released into production. If all goes well, you catch the bugs and issues before the product is rolled out to customers. Or, you might report back to the company and tell them where they stand security-wise.
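To make the difference between bruteforcing endpoints and reading the configured routes concrete, here is a small Python sketch. All names in it are hypothetical: `CONFIGURED_ROUTES` stands in for whatever route table a framework would expose, and `fake_probe` replaces a real HTTP request so the example runs offline.

```python
# Hypothetical route table, standing in for a web framework's routing config.
CONFIGURED_ROUTES = {
    "/api/v1/users": "list_users",
    "/api/v1/users/export": "export_users",
    "/api/v1/internal/debug": "debug_dump",
}


def fake_probe(path):
    """Stand-in for an HTTP request; True means the path exists on the server."""
    return path in CONFIGURED_ROUTES


def black_box_discover(probe, wordlist):
    """Guess paths from a wordlist; only finds what the list happens to contain."""
    return sorted(path for path in wordlist if probe(path))


def white_box_discover(routes):
    """Read the route table directly; every configured endpoint is known up front."""
    return sorted(routes)


wordlist = ["/api/v1/users", "/api/v1/admin", "/api/v1/login"]
found_black = black_box_discover(fake_probe, wordlist)
found_white = white_box_discover(CONFIGURED_ROUTES)
missed = sorted(set(found_white) - set(found_black))

print("black-box found:", found_black)
print("white-box found:", found_white)
print("missed from the outside:", missed)
```

In this toy setup the wordlist only hits one of the three endpoints, while the white-box view sees all of them, including the export and debug routes you would probably want to audit first.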

There is no guarantee that software is 100% vulnerability-free. That's why bigger companies such as Google run bug bounty programs. They have their own internal teams auditing code, and it's important to carry out the internal application security work ideally before the production stage, but bugs will be missed... bugs that bug bounty hunters can find at a later date.

Final words

I hope that these thoughts and the resulting discussion helped you to get a better overview of the different areas within the IT security sector. I also hope that it helps you better focus on what you should learn for the job that you are interested in.